Tuesday, December 22, 2009

Monday, December 21, 2009

Wednesday, December 16, 2009

Class Project

Here is the PPM project I have been working on. The paper is rougher than I would like, since I had to rush to submit it on time. However, I am pretty excited about some of the topics it covers and the algorithm it introduces. I learned a surprising amount about information theory while researching the topic. I plan to continue developing the algorithm and applying it to new domains.

Monday, December 14, 2009

Sunday, December 13, 2009

NIPS

Earlier this week I had a great time at the NIPS conference. I got to meet several famous AI researchers. The best part of the conference was the poster/demo sessions. My favourite posters were related to Hinton's Restricted Boltzmann Machines. I also got a chance to talk with some people from Numenta about HTM. Google and Microsoft Research both took an active part in the conference. Google had an interesting booth unveiling research they had done on using quantum computing for image search.

Disclaimer for the following paragraph: I know nothing about quantum computing and have a bad memory, so this information is not reliable. When I walked up to the booth I was very sceptical since, as far as I know, quantum computers are still theoretical. From what I understood, they used an adiabatic quantum computer to solve an optimization task. I think introducing the image search problem is misleading, because any improvement in image search is not necessarily due to the quantum computer. They should have presented their image search algorithm and their quantum optimization algorithm completely independently of each other. I would only be impressed if the quantum computer were solving an optimization problem faster than can currently be done on a classical computer. I asked the Google guy the following question: "I remember seeing a proof that a certain type of adiabatic quantum computer could be simulated on a classical computer just as efficiently using simulated annealing. Any comments?" His response seemed to indicate that he thought the quantum algorithm they were using could solve, in polynomial time, problems that a classical computer cannot solve in polynomial time. In addition, he gave a cool explanation of how the quantum computer does the optimization: it simulates a particle in the search space and allows the particle to travel through walls so that it doesn't get stuck in local minima. Also, the search space is slowly changed from an easy-to-solve "bowl" to the actual objective function so that the particle converges on the global minimum. The algorithm supposedly needs to be run several times since it is stochastic and doesn't necessarily find the global minimum every time.
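
To make the "bowl slowly turns into the real objective" idea concrete, here is a rough classical analogy in Python. This is not the algorithm from the booth and there is nothing quantum about it: it is ordinary simulated annealing where random thermal hops stand in for the particle travelling through walls. The objective function, the bowl, the temperature schedule, and all the names are made up for illustration.

```python
import math
import random

def objective(x):
    # Hypothetical bumpy 1-D function: global minimum at x = 1 (value 0),
    # with shallower local minima on either side.
    u = x - 1.0
    return 0.1 * u * u + (1.0 - math.cos(3.0 * u))

def bowl(x):
    # Easy starting landscape with a single minimum at x = 0.
    return x * x

def blended(x, s):
    # s = 0 gives the bowl, s = 1 gives the real objective.
    return (1.0 - s) * bowl(x) + s * objective(x)

def anneal_once(rng, steps=2000, step_size=0.5):
    x = 0.0
    best_x, best_f = x, objective(x)
    for i in range(steps):
        s = (i + 1) / steps                     # how far the landscape has morphed
        temperature = 2.0 * (1.0 - s) + 0.01    # assumed linear cooling schedule
        candidate = x + rng.gauss(0.0, step_size)
        delta = blended(candidate, s) - blended(x, s)
        # Metropolis rule: always accept improvements, sometimes accept worse moves.
        if delta <= 0 or rng.random() < math.exp(-delta / temperature):
            x = candidate
        # Remember the best point seen, scored on the real objective.
        f = objective(x)
        if f < best_f:
            best_x, best_f = x, f
    return best_x, best_f

def anneal(restarts=5, seed=0):
    # The search is stochastic, so run it several times and keep the best run.
    rng = random.Random(seed)
    runs = [anneal_once(rng) for _ in range(restarts)]
    return min(runs, key=lambda run: run[1])

best_x, best_f = anneal()
print(best_x, best_f)
```

The restarts mirror the point about running the algorithm several times: any single run can settle in a local minimum, so the sketch keeps the best result across runs.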

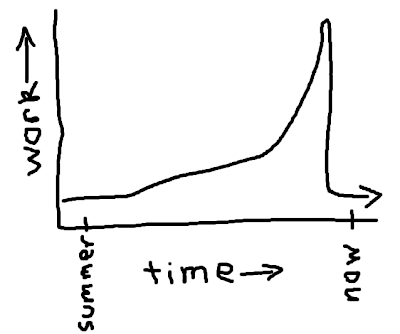

Prediction by Partial Matching

I am currently working on a project for a natural language processing course. Given my interest in temporal pattern recognition, I decided to make the project about text prediction. For the last few weeks I have been really excited because I thought I had discovered an innovative algorithm that was doing extremely well compared to existing methods. In particular, it completely outperforms my previous approaches using neural networks/HTM on almost any pattern recognition task. However, today I was disappointed to learn that almost exactly the same algorithm was discovered back in the 80s. It is a data compression algorithm called prediction by partial matching (PPM). PPM happens to be one of the best text compression algorithms, so at least it's nice to know that I independently discovered it. However, I would have been much happier if I had been the first to do so.
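
For anyone who hasn't seen PPM before, the core idea fits in a few lines of Python. This is a heavily simplified sketch, not my project code and not full PPM: it only does the "partial matching" part (try the longest context seen so far, then back off to shorter ones) and leaves out the escape probabilities and arithmetic coding that a real PPM compressor uses. The class and method names are made up for illustration.

```python
from collections import Counter, defaultdict

class PartialMatchPredictor:
    def __init__(self, max_order=3):
        self.max_order = max_order
        # counts[context][next_char] = number of times next_char followed context
        self.counts = defaultdict(Counter)

    def train(self, text):
        for i, ch in enumerate(text):
            # Record ch under every context of length 0..max_order that precedes it.
            for order in range(self.max_order + 1):
                if i - order < 0:
                    break
                context = text[i - order:i]
                self.counts[context][ch] += 1

    def predict(self, history):
        # Try the longest context first, then back off to shorter ones.
        for order in range(min(self.max_order, len(history)), -1, -1):
            context = history[len(history) - order:]
            if context in self.counts:
                return self.counts[context].most_common(1)[0][0]
        return None  # nothing has been seen yet

model = PartialMatchPredictor(max_order=3)
model.train("abracadabra")
print(model.predict("abr"))   # "abr" was always followed by "a"
print(model.predict("xyzc"))  # unseen long contexts, so it backs off to "c"
```

In the second call none of the longer contexts were ever seen, so the prediction falls back to what followed "c" in the training text.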

Tuesday, December 01, 2009

Hockey Bears

I've done some online research on these bears, and as far as I can tell they appear to be genuine. [YouTube link]
